An introduction to medieval cities and cloud security

Panorama of the Cité de Carcassonne by Chensiyuan CC BY-SA 4.0, from Wikimedia Commons

Sometimes, trust is THE most important resource - something vividly apparent when standing atop the towering walls of Carcassonne, a fortified city in south-western France. For centuries, its citizens were subject to countless sieges and invasion attempts, leaving them with little reason to trust anyone out on the open plains surrounding the city. And yet they had to extend some degree of trust in order to trade, to travel, to work their farms and fields and to keep connections to other villages: in short, to function as a society.

The Carcassonnians solved this dilemma using five proven principles:

They established a zone of high trust. Rather than securing every house individually, they surrounded the city with massive, almost impenetrable walls. Everyone within was one of them. Everyone outside had to prove themselves to gain access.

They established an additional zone of lower trust for facilities requiring external exposure: stables, blacksmiths and a guesthouse were built just outside the city walls. These were still surrounded by fences and within reach of the archers atop the wall, but also more easily accessible from the wider plains.

They disabled direct access and allowed entry only by proxy: carriages and traveling folk couldn't just pass through the gate, but had to traverse a gatehouse designed with winding paths, portcullises and built-in defenses.

They limited access to as few points as possible - in fact, all traffic in and out of the walled city had to pass through one of just two gates.

They established a clear set of checks and access criteria for entry, ranging from simple face-checks to recommendation letters and royal orders for outside visitors seeking to enter.

Fast forward a millennium and our threats have changed from sword-wielding invaders to keyboard-stroking hackers, our defenses from fortress walls to firewalls and VPNs - yet the underlying principles remain the same.

Basic principles of cloud security

In fact, these principles still form the basis of securing any AWS, Google Cloud, Azure or similar deployment, and they can help us make sense of the jungle of VPCs, subnets, key stores, identity providers, security groups, NAT and internet gateways required to do so.

It is important to stress that this list of principles is - and necessarily has to be - incomplete. Depending on the specific infrastructure, additional or entirely different steps might be necessary to secure an application.

So how can we utilize these principles to secure our cloud infrastructure?

1. Establishing a trusted zone

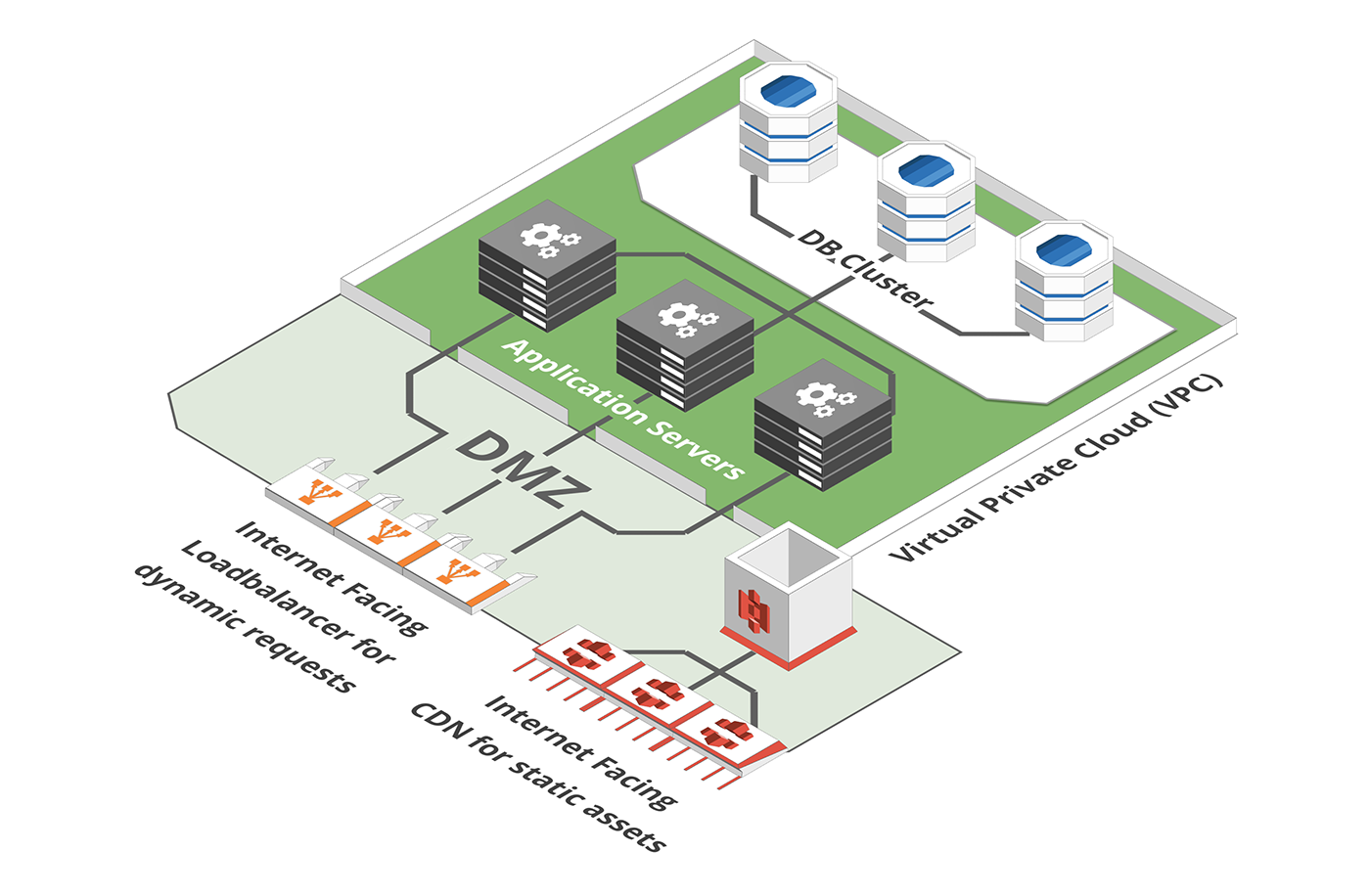

The public internet is a scary place, and internet applications would be most secure if they had nothing to do with it. Unfortunately, they would also be fairly useless. That said, only a small subset of components actually needs to reach the internet; all others (application logic, databases, caches, message queues etc.) are best walled off from it. This is commonly achieved using a Virtual Private Network (VPN) or, in the cloud, a Virtual Private Cloud (VPC).

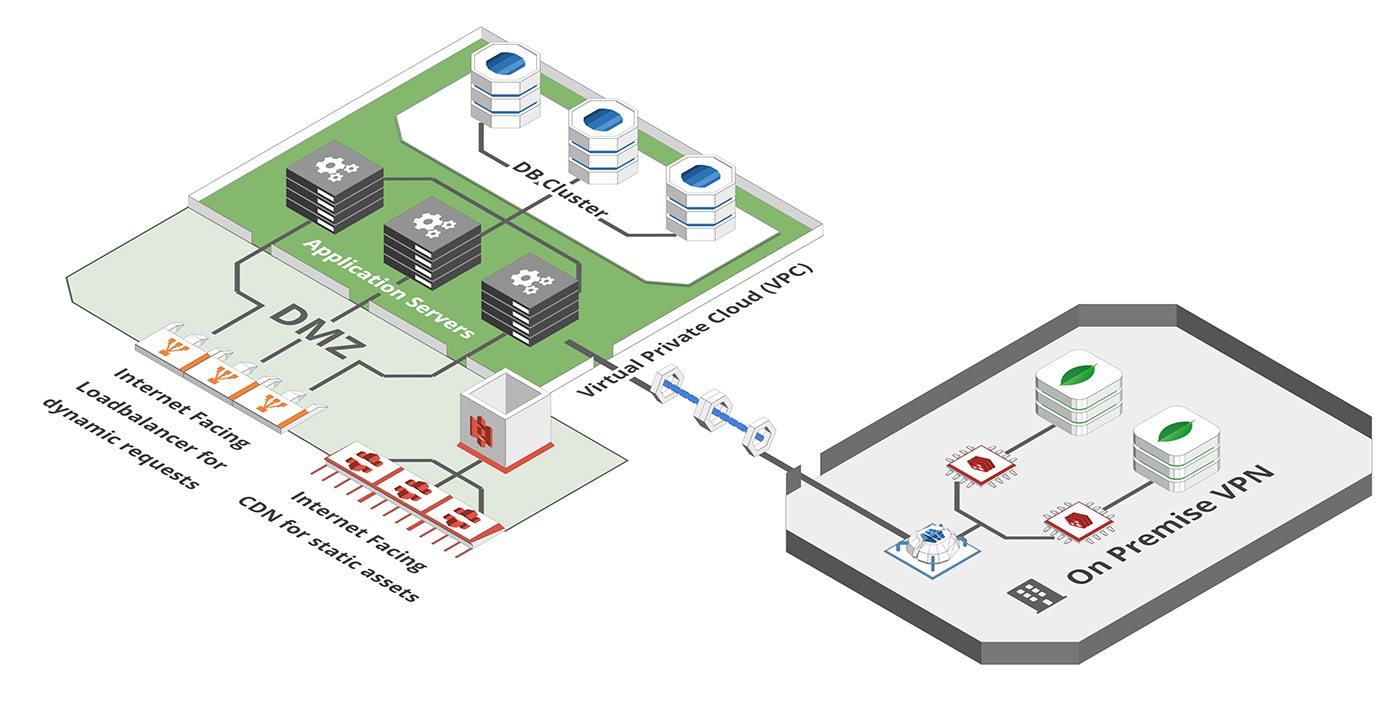

Such a VPC behaves like an isolated network, disconnected from the wider internet. The fact that this construct is purely virtual makes it extremely powerful: your VPN/VPC doesn't have to be confined to a single physical location. It can even combine your cloud provider's machines with your on-premise computers, turning your globally distributed infrastructure into the easy-to-manage equivalent of a LAN party in your friend's basement.

VPCs can also be divided into multiple isolated zones called "subnets", each with its own IP address range and access settings. It is common practice to have at least two of these subnets: a private one that hosts all servers, databases etc., and a public one that can access the internet.
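To make the idea of separate IP ranges a little more concrete, here is a minimal Python sketch using the standard `ipaddress` module. The address plan (a VPC owning `10.0.0.0/16`, split into `/24` subnets) is a hypothetical example rather than a recommendation, and the helper `subnet_of` is purely illustrative; cloud providers let you define equivalent ranges through their own consoles and APIs.

```python
import ipaddress

# Hypothetical address plan: the VPC owns the private range 10.0.0.0/16.
vpc = ipaddress.ip_network("10.0.0.0/16")

# Carve the VPC into /24 subnets (256 addresses each) and use the first two.
subnets = list(vpc.subnets(new_prefix=24))
private_subnet = subnets[0]  # 10.0.0.0/24: servers, databases, caches
public_subnet = subnets[1]   # 10.0.1.0/24: components that talk to the internet


def subnet_of(address: str) -> str:
    """Return which of the two subnets an address belongs to, if any."""
    ip = ipaddress.ip_address(address)
    if ip in private_subnet:
        return "private"
    if ip in public_subnet:
        return "public"
    return "outside VPC"


for addr in ["10.0.0.17", "10.0.1.5", "8.8.8.8"]:
    print(addr, "->", subnet_of(addr))
# 10.0.0.17 -> private
# 10.0.1.5 -> public
# 8.8.8.8 -> outside VPC
```

Because each subnet is just a slice of the VPC's range, access settings can later be attached per slice rather than per machine.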

2. Creating a zone with lower trust and higher accessibility

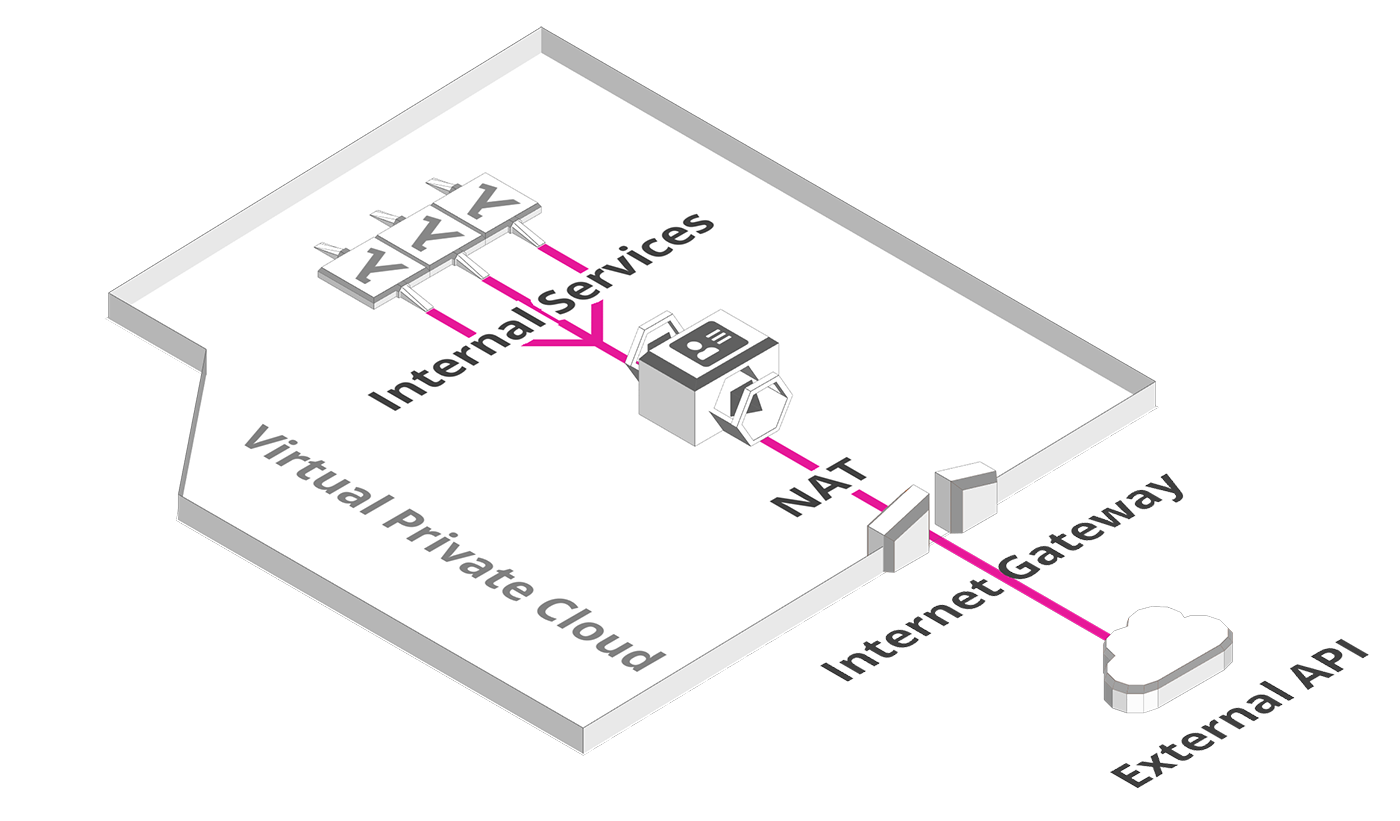

This second subnet usually hosts components that need to communicate outwards - e.g. web services that call external APIs. It can also be used as a DMZ (demilitarized zone) providing an isolated environment for web-facing services such as reverse proxies or static asset servers that process incoming requests.

Internet access relies on two additional constructs: NAT (Network Address Translation) and an Internet Gateway. A NAT works a bit like the post room in an office building: outgoing letters all leave with the building's shared return address, and when replies arrive, the post room routes each one back to the desk of whoever sent the original. This lets machines in the private subnet reach out to the internet without being directly reachable from it. An Internet Gateway is just that: a gateway to the internet.
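The post-room analogy can be sketched as a toy translation table in Python. This is a deliberately simplified model, assuming a single shared public address and keying mappings only on the internal address and port; real NAT implementations also track destination, protocol and timeouts. The class `Nat`, its methods and the addresses used are hypothetical, chosen for illustration only.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Nat:
    """Toy source NAT: many private addresses share one public address."""
    public_ip: str
    next_port: int = 40000
    # (private_ip, private_port) -> public port, plus the reverse mapping
    outbound: dict = field(default_factory=dict)
    inbound: dict = field(default_factory=dict)

    def send(self, private_ip: str, private_port: int) -> tuple[str, int]:
        """Rewrite the sender of an outgoing packet to the shared public address."""
        key = (private_ip, private_port)
        if key not in self.outbound:
            self.outbound[key] = self.next_port
            self.inbound[self.next_port] = key
            self.next_port += 1
        return self.public_ip, self.outbound[key]

    def receive(self, public_port: int) -> Optional[tuple[str, int]]:
        """Route a reply back to the internal sender; None means drop it."""
        return self.inbound.get(public_port)


nat = Nat(public_ip="203.0.113.10")
print(nat.send("10.0.0.17", 51515))  # ('203.0.113.10', 40000)
print(nat.send("10.0.0.42", 51515))  # ('203.0.113.10', 40001)
print(nat.receive(40001))            # ('10.0.0.42', 51515)
print(nat.receive(50000))            # None: nobody inside asked for this
```

In this model, `receive` returns `None` for a port that no internal machine ever used: unsolicited traffic has no mapping and nowhere to go.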

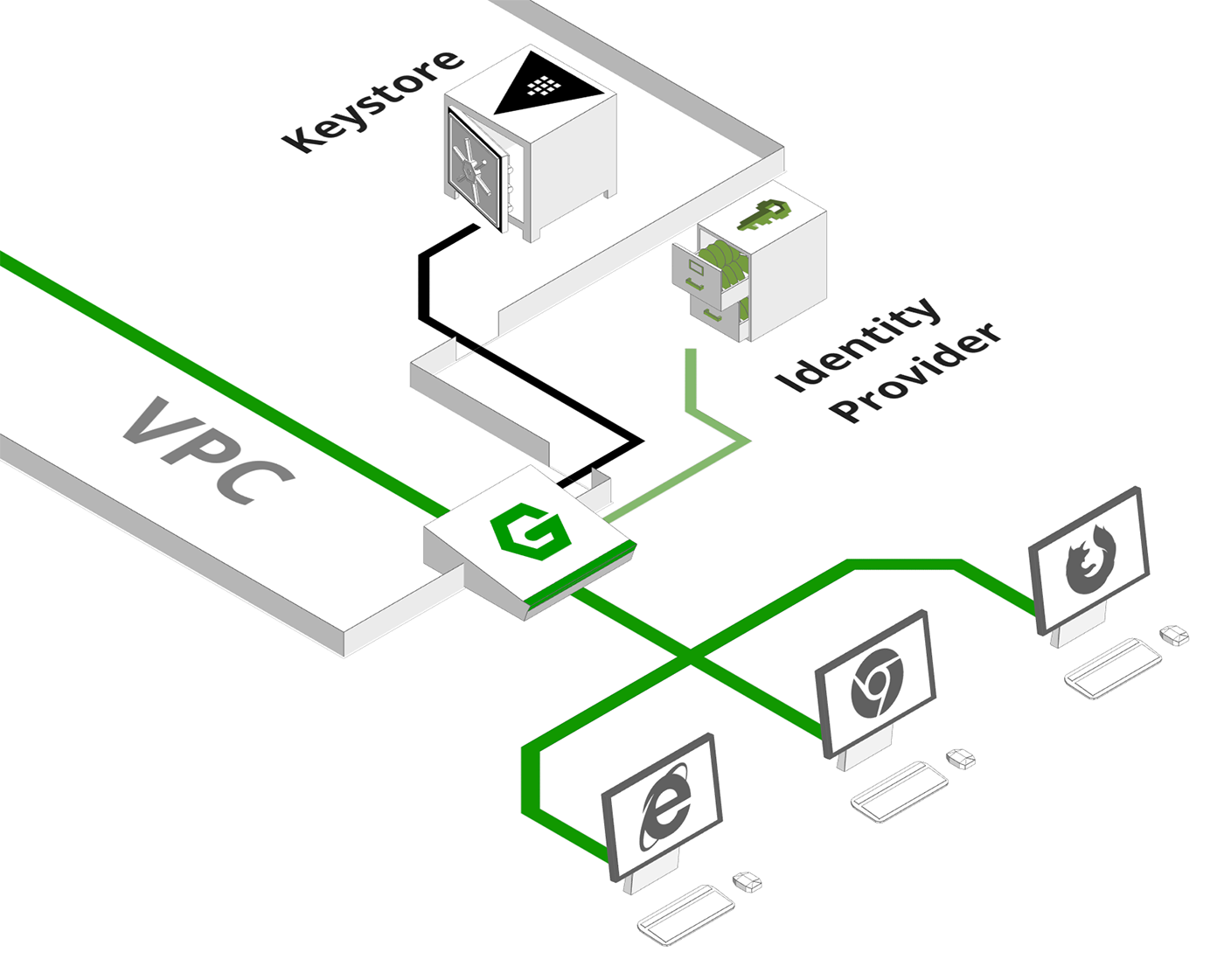

3. No direct access / access only by proxy

The internet-facing subnet is usually home to a group of processes that receive requests from the internet and forward them to our internal infrastructure. These come in many shapes and sizes, such as reverse proxies, API gateways or load balancers, but they all have something in common: they act as the frontmost layer of defense and as gatekeepers for incoming traffic. Their duties can include checking requests for syntax errors, verifying session tokens and credentials or evaluating permissions and access rights. The beauty of this approach is that invalid requests never even enter the trusted section of the network.
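A gatekeeper of this kind can be sketched in a few lines of Python. The sketch assumes JSON request bodies and bearer tokens in an `Authorization` header; `VALID_SESSIONS`, `gatekeeper` and `internal_service` are hypothetical stand-ins for a real session store, proxy and backend. It only illustrates the order of the checks, not a production proxy.

```python
import json
from typing import Callable

# Hypothetical set of valid session tokens; a real gatekeeper would consult
# a session store or identity provider instead.
VALID_SESSIONS = {"token-abc123"}


def gatekeeper(raw_body: str, headers: dict,
               forward: Callable[[dict], str]) -> tuple[int, str]:
    """Reject bad requests at the edge; only valid ones reach `forward`."""
    # 1. Syntax check: the body must be a JSON object.
    try:
        payload = json.loads(raw_body)
    except json.JSONDecodeError:
        return 400, "malformed request"
    if not isinstance(payload, dict):
        return 400, "malformed request"
    # 2. Session check: a known bearer token must be present.
    auth = headers.get("Authorization", "")
    if not auth.startswith("Bearer ") or auth[len("Bearer "):] not in VALID_SESSIONS:
        return 401, "invalid session"
    # 3. Only now does the request enter the trusted network.
    return 200, forward(payload)


def internal_service(payload: dict) -> str:
    return f"hello {payload.get('name', 'stranger')}"


ok = {"Authorization": "Bearer token-abc123"}
print(gatekeeper('{"name": "Ada"}', ok, internal_service))  # (200, 'hello Ada')
print(gatekeeper('{"name": ', ok, internal_service))        # (400, 'malformed request')
print(gatekeeper('{"name": "Eve"}', {}, internal_service))  # (401, 'invalid session')
```

The backend function is only ever called on the last line of `gatekeeper`, so the two rejected requests never reach it.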

4. As few access points as possible

But even with an isolated zone and gatekeepers, there are still attack vectors. Servers tend to expose admin GUIs and monitoring ports, machines might run background processes that create vulnerabilities, and so on. To make sure that only fortified systems are publicly accessible, it is common practice to close all access routes by default and only explicitly open those that are absolutely needed. This includes configuring security groups to limit traffic to specific IPs, opening only web-facing ports, and using firewalls to pre-filter incoming connections based on more complex criteria.
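The default-deny idea can be illustrated with a small rule evaluator in Python, loosely modeled on how security groups behave: there are only allow rules, and anything not explicitly allowed is rejected. The rule set, the `AllowRule` type, the `is_allowed` function and the "office" range `198.51.100.0/24` are hypothetical examples.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class AllowRule:
    """One explicitly opened route: traffic from `source` to `port`."""
    source: str  # CIDR block; "0.0.0.0/0" means any IPv4 address
    port: int


# Hypothetical rule set: HTTPS open to everyone, SSH only from an office range.
RULES = [
    AllowRule("0.0.0.0/0", 443),
    AllowRule("198.51.100.0/24", 22),
]


def is_allowed(source_ip: str, port: int, rules=RULES) -> bool:
    """Default deny: permit a connection only if some rule explicitly matches."""
    ip = ipaddress.ip_address(source_ip)
    return any(
        port == rule.port and ip in ipaddress.ip_network(rule.source)
        for rule in rules
    )


print(is_allowed("203.0.113.7", 443))     # True: HTTPS is open to anyone
print(is_allowed("203.0.113.7", 22))      # False: SSH only from the office
print(is_allowed("198.51.100.20", 22))    # True
print(is_allowed("198.51.100.20", 8080))  # False: admin port never opened
```

Note that the admin port is blocked without any rule mentioning it; forgetting to write a rule fails closed rather than open.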

5. Access checks

With all incoming requests funneled through a narrow bottleneck, we've established a perfect checkpoint for credential validation. This is an essential yet complex task that would warrant its own blog post, with countless self-built and ready-made solutions to choose from, ranging from third-party OAuth to cloud identity providers to enterprise-level access control systems. For the purpose of this list, it suffices to say that the best network security doesn't help much if users can simply walk through the front door.
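As a small taste of what credential validation at the checkpoint involves, here is a minimal Python sketch that issues and verifies HMAC-signed tokens with the standard `hmac` and `hashlib` modules. It is a swapped-in illustration, not OAuth or any particular identity provider: the token format, `SECRET_KEY`, `sign` and `verify` are assumptions, and it omits expiry, revocation and key rotation, which a real system would need.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical server-side secret; in practice it would come from a key store.
SECRET_KEY = b"replace-with-a-real-secret"


def sign(user_id: str) -> str:
    """Issue a token of the form '<user_id>.<hex HMAC-SHA256 signature>'."""
    sig = hmac.new(SECRET_KEY, user_id.encode(), hashlib.sha256).hexdigest()
    return f"{user_id}.{sig}"


def verify(token: str) -> Optional[str]:
    """Return the user id if the signature checks out, otherwise None."""
    user_id, sep, sig = token.rpartition(".")
    if not sep:
        return None  # no separator: not a token we issued
    expected = hmac.new(SECRET_KEY, user_id.encode(), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences
    return user_id if hmac.compare_digest(sig.encode(), expected.encode()) else None


token = sign("alice")
print(verify(token))                              # alice
print(verify("mallory" + token[len("alice"):]))  # None: signature doesn't match
print(verify("garbage"))                          # None
```

Because the signature covers the user id, changing the id (here to `mallory`) invalidates the token without the server having to store any session state.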

Conclusion

With all the above steps in place, a system could be considered "secure" as far as common best practices are concerned. This is, however, a fairly limited definition of the term, as all of the above steps only protect against external attacks via the public internet.

Depending on corporate structure and security requirements, it might also be necessary to protect against attacks from within, e.g. the theft of trade secrets, as happened to Google's Waymo project, or a disgruntled ex-employee looking to take the database down on their way out.

Beyond that, as network security has advanced, attackers increasingly turn to social engineering: rather than bothering to break into a secure system, they simply show up in a blue overall and ask the receptionist for the way to the server room. Security thus remains the endless struggle between measures and countermeasures it was for medieval Carcassonne, and it will probably remain so for all eternity.